Recently I was tasked with deploying and operating a Symfony application on Kubernetes. Since PHP is not my primary area of work anymore, I was hoping to find an up-to-date guide or a set of best practices on the topic. Sadly, I found neither, so I decided to write about the process myself: containerizing the application, building the infrastructure and deploying it on Kubernetes.

Since this is a fairly large topic, I decided to break it up into smaller posts. This part covers containerizing a Symfony application.

If you are curious about the end result, you can find it on GitLab.

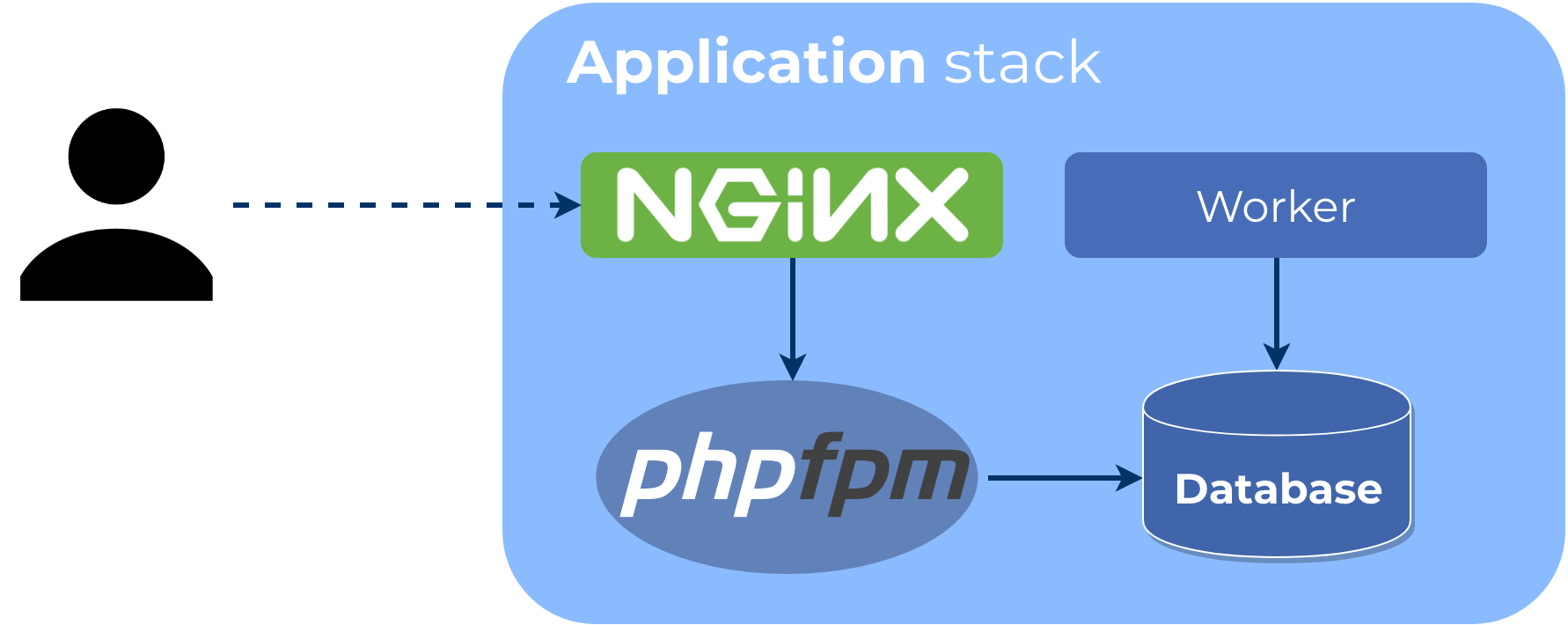

Application stack

Over the years, I became a drawing person. Not because I’m good at it, but because visualizing a problem helps me understand it and makes the thought process easier to follow.

Based on having some previous knowledge about Symfony, a first drawing could look like this:

As the picture shows, the application environment has four main components:

- PHP-FPM running the application

- Nginx serving the content and communicating with FPM via FastCGI

- A background worker of some sort

- A database to persist application data

In real life the stack could have many more components (e.g. more workers, caches, etc.), but for simplicity we will start with these four.

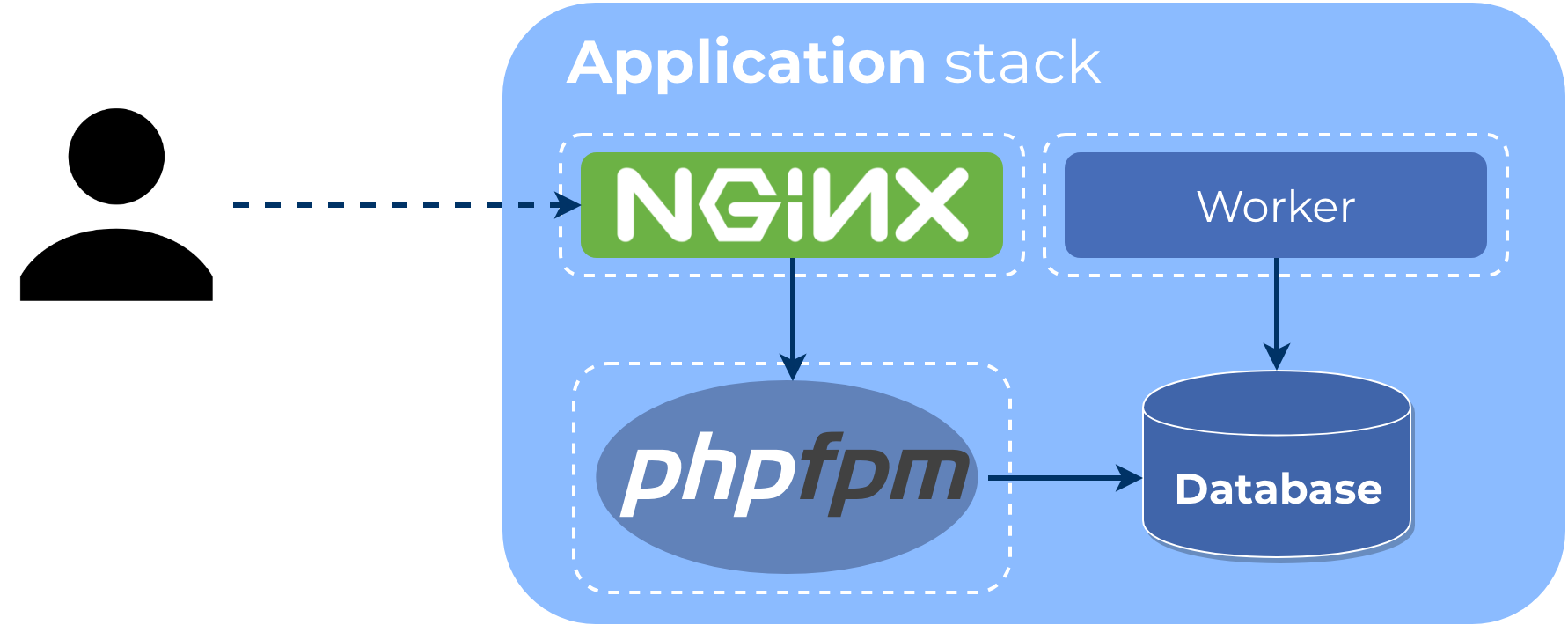

Now let’s add some containers to the picture:

If you search the internet, you can find all kinds of container setups for Symfony, often quite different from the one above:

- Apache with mod_php instead of Nginx and FPM

- Nginx and FPM in the same container

- Nginx and FPM AND the worker(s) in the same container (often combined with Supervisor)

While these setups certainly work, I prefer Nginx over Apache as a web server. Also, in container environments it is a common (I’d even say best) practice to put each process into its own container. Following these preferences and guidelines, the above setup seems to be the best fit.

(Note that these are containers we are talking about, not images.)

You might have noticed I didn’t put the database in a container. While running databases in containers is perfectly valid in development (or even preview/test) environments, I prefer using managed services in production. Almost all cloud providers (even DigitalOcean now) offer managed DB services, which in the end tend to be much cheaper (in terms of direct and indirect costs) than operating your own database cluster.

Application image

Let’s move on to creating our first container image. This image will be used to start both the FPM and the Worker containers, since they share the codebase and the runtime environment.

(Remember: we are switching from containers to images.)

To ensure that the final image does not contain any unnecessary junk or build-time dependencies, we are going to create the image using a multi-stage Dockerfile.

First stage: FPM base image

The first stage is the FPM base image, because it is the least likely to change and we want to leverage build caching.

    FROM php:7.3.3-fpm-alpine AS base

    WORKDIR /var/www

    # Override Docker configuration: listen on Unix socket instead of TCP
    RUN sed -i "s|listen = 9000|listen = /var/run/php/fpm.sock\nlisten.mode = 0666|" /usr/local/etc/php-fpm.d/zz-docker.conf

    # Install dependencies
    RUN set -xe \
        && apk add --no-cache bash icu-dev \
        && docker-php-ext-install pdo pdo_mysql intl pcntl

    CMD ["php-fpm"]

Pro tip: Use exact versions for base images. Upgrading becomes manual, but it ensures that you use the same version everywhere.

This stage is relatively simple: we set the workdir to the desired (web server) docroot, install some dependencies and configure FPM to be the default command.

The only strange part is this configuration override to make FPM listen on a Unix socket. In a traditional setup, Nginx and FPM would communicate using a Unix socket, but in a container setup they are in separate containers (remember the one process, one container rule) without a shared filesystem. This is why the official FPM image comes with TCP enabled instead, which is somewhat understandable.

But it’s absolutely possible to share files between two containers: using volumes. So instead of using TCP, we change the configuration back to a Unix socket, which we will mount in the Nginx container.
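To see what the sed override does, here is a minimal sketch that applies the same substitution to a scratch file. The sample contents are an assumption about what the official image ships in zz-docker.conf, so check the file in your own image. It also assumes a sed that supports -i without a suffix and expands \n in the replacement to a newline, which the RUN instruction above relies on as well.

```shell
#!/bin/sh
# Scratch file standing in for /usr/local/etc/php-fpm.d/zz-docker.conf
# (contents are an assumption, not copied from the image).
conf="$(mktemp)"
printf '[global]\ndaemonize = no\n\n[www]\nlisten = 9000\n' > "$conf"

# Same substitution as in the Dockerfile: replace the TCP port with a socket
# path and add a listen.mode line that opens the socket to all users.
sed -i "s|listen = 9000|listen = /var/run/php/fpm.sock\nlisten.mode = 0666|" "$conf"

# Show the resulting pool configuration.
cat "$conf"
```

The permissive listen.mode matters because Nginx and FPM typically run as different users in their respective containers, so the socket has to be accessible to both.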

Second stage: Composer

Moving on to the next stage: installing dependencies using Composer.

    FROM composer:1.8.4 AS composer

    RUN rm -rf /var/www && mkdir /var/www
    WORKDIR /var/www

    COPY composer.* /var/www/

    ARG APP_ENV=prod

    RUN set -xe \
        && if [ "$APP_ENV" = "prod" ]; then export ARGS="--no-dev"; fi \
        && composer install --prefer-dist --no-scripts --no-progress --no-suggest --no-interaction $ARGS

    COPY . /var/www

    RUN composer dump-autoload --classmap-authoritative

There are two things worth mentioning in this stage:

- The APP_ENV build argument controls whether development dependencies are installed. This will be useful in the next stage.

- In order to generate an authoritative classmap for autoloading, we copy everything to the image in this stage.

Pro tip: Lock the PHP version to the one used in your production environment in Composer’s platform config to make sure dependencies are resolved for the right PHP version.

Other than these, this stage and the Composer installation are pretty standard.
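The flag selection in the RUN instruction can be tried outside Docker. The following is a small sketch that mirrors it in a plain shell function; composer_install_cmd is a hypothetical helper name used only for illustration, and it prints the command instead of running Composer.

```shell
#!/bin/sh
# Mirror of the flag selection in the Composer stage: only a prod build
# gets --no-dev; any other APP_ENV value installs dev dependencies too.
composer_install_cmd() {
    app_env="${1:-prod}"   # same default as ARG APP_ENV=prod
    args=""
    if [ "$app_env" = "prod" ]; then
        args="--no-dev"
    fi
    # $args is intentionally unquoted so an empty value adds no argument.
    echo composer install --prefer-dist --no-scripts --no-progress --no-suggest --no-interaction $args
}

composer_install_cmd prod
composer_install_cmd dev
```

Only the first printed command ends with --no-dev, which is the entire difference between the production and development dependency sets.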

Final stage: Application image

The last stage of the build is also quite simple.

    FROM base

    ARG APP_ENV=prod
    ARG APP_DEBUG=0

    ENV APP_ENV=$APP_ENV
    ENV APP_DEBUG=$APP_DEBUG

    COPY --from=composer /var/www/ /var/www/

    # Memory limit increase is required by the dev image
    RUN php -d memory_limit=256M bin/console cache:clear
    RUN bin/console assets:install

We can see the APP_ENV build argument again. In this case it will be the Symfony environment used to build and run the application.

All cache warmup, and basically every step that changes anything in the container’s filesystem, has to happen here, at build time, so that the running container starts quickly and runs the same way every time.

By changing the APP_ENV variable we can build separate development and production images.

Development images are useful in preview environments for debugging.

There is one more thing required for building the image (besides the application itself): a .dockerignore file.

Much like .gitignore, it controls which files are left out of the build context, and therefore out of the image. This matters when you have instructions like COPY . /whatever in your Dockerfile, which is true in our case.

So before hitting the docker build command, place the following in your project root in a file called .dockerignore:

    *
    !/bin/
    !/config/
    !/public/
    !/src/
    !/templates/
    !/translations/
    !/.env
    !/composer.*
    !/symfony.lock

As you can see, it looks pretty much the same as a .gitignore file and it works similarly as well.

In our case we start by excluding everything from the image and add exceptions for files we need.

This way you can ensure that only the files necessary to run the application are copied to the final image.

If you need additional files/folders for the application to run, make sure to add an exception for them in the ignore file, otherwise you might see errors like this:

    Step 22/23 : COPY docker/nginx/default.conf /etc/nginx/conf.d/default.conf
    COPY failed: stat /var/lib/docker/tmp/docker-builder152684387/docker/nginx/default.conf: no such file or directory
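A quick check before building can catch this kind of failure early. The sketch below assumes the whitelist style used in this post (one !/path/ exception per line). The is_whitelisted function is a hypothetical helper, not part of Docker, and it only does a literal line match rather than implementing the full .dockerignore pattern rules.

```shell
#!/bin/sh
# Return success if the ignore file contains a literal exception line
# for the given top-level directory, e.g. "!/docker/".
is_whitelisted() {
    dir="$1"
    ignore_file="${2:-.dockerignore}"
    grep -qxF "!/${dir}/" "$ignore_file"
}

# Demo against a scratch file so the snippet runs anywhere.
demo="$(mktemp)"
printf '*\n!/src/\n!/public/\n' > "$demo"

for dir in src docker; do
    if is_whitelisted "$dir" "$demo"; then
        echo "$dir: included in the build context"
    else
        echo "$dir: ignored, add !/$dir/ to .dockerignore"
    fi
done
```

In a real project you would call it without the second argument so that it reads the .dockerignore file in the current directory.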

You can go ahead now and build the production image:

    docker build -t symfony-app:local .

    # OR build a development image
    docker build -t symfony-app:local-dev --build-arg APP_ENV=dev .

Web server image

Most of the guides and posts on the internet will tell you that Dockerfiles should be self-contained, meaning that you should be able to build the image by just having the code and the Dockerfile itself. Sadly, in our case that would mean having to duplicate most of the instructions in the application Dockerfile, because the assets that are served from the public/ directory have to be built as well.

While doing that would certainly not be wrong, I’m going to show you another solution which requires considerably less maintenance overhead and will result in faster CI build times.

You might have noticed that the asset building is already part of the application image. It’s not there by accident: we are going to copy the asset files from the already built application image into the web server image. Here is how:

    ARG ASSET_IMAGE
    FROM ${ASSET_IMAGE} AS assets

    FROM nginx:1.15.9-alpine

    COPY docker/nginx/default.conf /etc/nginx/conf.d/default.conf
    COPY --from=assets /var/www/public /var/www/public

The above Dockerfile expects a build argument called ASSET_IMAGE, which is the name of the application image we’ve just built. The image will be used as a build stage (so this is again a multi-stage build), and the final stage will copy the required files from the first stage.

Paste the above lines into a separate Dockerfile (e.g. Dockerfile.nginx).
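Since the web server image is built from the application image, the two builds have to run in that order. Here is a minimal sketch of a wrapper that keeps them together; build_images and run are hypothetical helper names, and -f Dockerfile.nginx assumes the example file name suggested above. By default it only prints the commands, so it is safe to try before the Nginx configuration is in place. Set DRY_RUN=0 to actually run the builds.

```shell
#!/bin/sh
# Print the command when DRY_RUN is 1 (the default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Build the application image first, then the web image that copies
# the assets out of it.
build_images() {
    tag="${1:-local}"        # image tag, e.g. local or local-dev
    app_env="${2:-prod}"     # Symfony environment baked into the app image
    run docker build -t "symfony-app:${tag}" --build-arg "APP_ENV=${app_env}" . &&
    run docker build -t "symfony-web:${tag}" --build-arg "ASSET_IMAGE=symfony-app:${tag}" -f Dockerfile.nginx .
}

build_images local prod
build_images local-dev dev
```

Chaining the two builds with && means a failed application build stops the script before the web image is attempted against a stale or missing asset image.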

Before proceeding to the image build, there is one more thing we have to do: configure nginx. The following configuration is based on the minimal example in the Symfony documentation:

    # Based on https://symfony.com/doc/current/setup/web_server_configuration.html#nginx
    server {
        listen 80 default_server;
        server_name localhost;
        root /var/www/public;

        location / {
            # try to serve file directly, fallback to index.php
            try_files $uri /index.php$is_args$args;
        }

        location ~ ^/index\.php(/|$) {
            fastcgi_pass unix:/var/run/php/fpm.sock;
            fastcgi_split_path_info ^(.+\.php)(/.*)$;
            include fastcgi_params;

            # When you are using symlinks to link the document root to the
            # current version of your application, you should pass the real
            # application path instead of the path to the symlink to PHP
            # FPM.
            # Otherwise, PHP's OPcache may not properly detect changes to
            # your PHP files (see https://github.com/zendtech/ZendOptimizerPlus/issues/126
            # for more information).
            fastcgi_param SCRIPT_FILENAME $realpath_root$fastcgi_script_name;
            fastcgi_param DOCUMENT_ROOT $realpath_root;

            # Prevents URIs that include the front controller. This will 404:
            # http://domain.tld/index.php/some-path
            # Remove the internal directive to allow URIs like this
            internal;
        }

        # return 404 for all other php files not matching the front controller
        # this prevents access to other php files you don't want to be accessible.
        location ~ \.php$ {
            return 404;
        }

        # Turn off logging for favicons and robots.txt
        location ~ ^/android-chrome-|^/apple-touch-|^/browserconfig.xml$|^/coast-|^/favicon.ico$|^/favicon-|^/firefox_app_|^/manifest.json$|^/manifest.webapp$|^/mstile-|^/open-graph.png$|^/twitter.png$|^/yandex- {
            log_not_found off;
            access_log off;
        }

        location = /robots.txt {
            log_not_found off;
            access_log off;
        }
    }

After pasting the above lines into docker/nginx/default.conf and adding !/docker/ to the .dockerignore file,

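you can go ahead and build the image. Because the web server Dockerfile was saved under a separate name, pass it with -f (Dockerfile.nginx here, adjust it if you chose a different name):

    docker build -t symfony-web:local --build-arg ASSET_IMAGE=symfony-app:local -f Dockerfile.nginx .

    # OR build a development image
    docker build -t symfony-web:local-dev --build-arg ASSET_IMAGE=symfony-app:local-dev -f Dockerfile.nginx .

Setting up Docker Compose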

It’s time to test our containerized application. It would be easy at this point to just start the containers manually, but we can probably reuse the test environment in the future, so using Docker Compose seems like an obvious choice. For now, let’s just stick to the web and the application containers:

    version: "3.7"

    services:
        app:
            image: symfony-app:local
            volumes:
                - phpsocket:/var/run/php

        web:
            image: symfony-web:local
            ports:
                - "8080:80"
            volumes:
                - phpsocket:/var/run/php
            depends_on:
                - app

    volumes:
        phpsocket:

Notice the volume mount that shares the Unix socket between the two containers.

Save the above snippet somewhere as docker-compose.yml and execute the following:

    docker-compose up -d

If everything went well, opening http://localhost:8080 (the port published in the compose file) should show a 404 page, since we are in the prod environment and haven’t added any controllers. If you modify the compose file to use the development images, the Symfony welcome page should greet you instead.
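One way to switch to the development images without editing the main file is a Compose override file. The sketch below writes a hypothetical docker-compose.dev.yml that only replaces the image tags. The file name is an assumption, and it relies on the local-dev images having been built as shown earlier. It prints the command to start the stack rather than running it.

```shell
#!/bin/sh
# Write an override file that swaps in the development images.
cat > docker-compose.dev.yml <<'EOF'
version: "3.7"

services:
    app:
        image: symfony-app:local-dev
    web:
        image: symfony-web:local-dev
EOF

# Later -f files are merged over earlier ones, so only the image keys change;
# volumes, ports and depends_on still come from docker-compose.yml.
echo "docker-compose -f docker-compose.yml -f docker-compose.dev.yml up -d"
```

Keeping the override in its own file leaves the production-like setup untouched, which makes it easy to switch between the two.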

Conclusion

Containerizing Symfony applications is relatively easy and not that different from a traditional setup, but the result is much more portable and scalable. The provided Docker Compose setup is already capable of serving as a development/test environment. Adding further components (database, worker, etc.) to it is straightforward.

Both the example application and the Docker Compose setup can be found on GitLab.

In the upcoming posts I will automate building the container images, set up a simple Kubernetes cluster and configure the build pipeline to automatically deploy the application.